The Three Types of LLM-Powered Apps

There has been an explosion of LLM-powered apps in 2023. Enough that we can now categorize them into three different buckets: LLM plus prompt, LLM plus data, LLM plus product.

These three build on each other, in that order, and they become much more defensible as they go. The first class of apps (LLM plus prompt) can be built on its own, but LLM plus product apps require the other two and are much harder for a competitor to combat.

LLM Plus Prompt

This class is the simplest to build, and so was the one that companies that just had to have anything "ChatGPT" rushed out. It's most often a chat interface, although it can also be a command-and-response interface, and is paired with some prompt engineering.

While there can be some light data connections (e.g., to search or to a database), the apps really are just a custom prompt to communicate with an existing LLM, perhaps with a framework to prevent jailbreaking.
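To make that concrete, here is a minimal sketch of what such an app boils down to: a fixed system prompt, a thin guard, and a call to someone else's model. Everything here is an assumption for illustration. The prompt text, the blocked phrases, and the `fake_llm` stand-in are all hypothetical, and a keyword check like this is nowhere near real jailbreak prevention.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]

# Hypothetical system prompt for a recipe assistant; not taken from any real product.
SYSTEM_PROMPT = "You are a friendly assistant that suggests meal ideas and recipes."

# Deliberately naive guard phrases; real jailbreak prevention needs far more than this.
BLOCKED_PHRASES = ("ignore previous instructions", "reveal your system prompt")


def looks_like_jailbreak(user_input: str) -> bool:
    """Return True if the input contains one of the blocked phrases."""
    lowered = user_input.lower()
    return any(phrase in lowered for phrase in BLOCKED_PHRASES)


def build_messages(user_input: str) -> List[Message]:
    """Wrap the user's text in the app's custom prompt."""
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_input},
    ]


def answer(user_input: str, call_llm: Callable[[List[Message]], str]) -> str:
    """Guard the input, then hand the prompt to whatever model is plugged in."""
    if looks_like_jailbreak(user_input):
        return "Sorry, I can only help with meal ideas and recipes."
    return call_llm(build_messages(user_input))


def fake_llm(messages: List[Message]) -> str:
    # Stand-in for a real model call so the sketch runs offline.
    return f"(model reply to: {messages[-1]['content']})"


print(answer("What can I cook with leeks?", fake_llm))
```

Notice how little is here that a competitor, or the model provider itself, couldn't reproduce in an afternoon.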

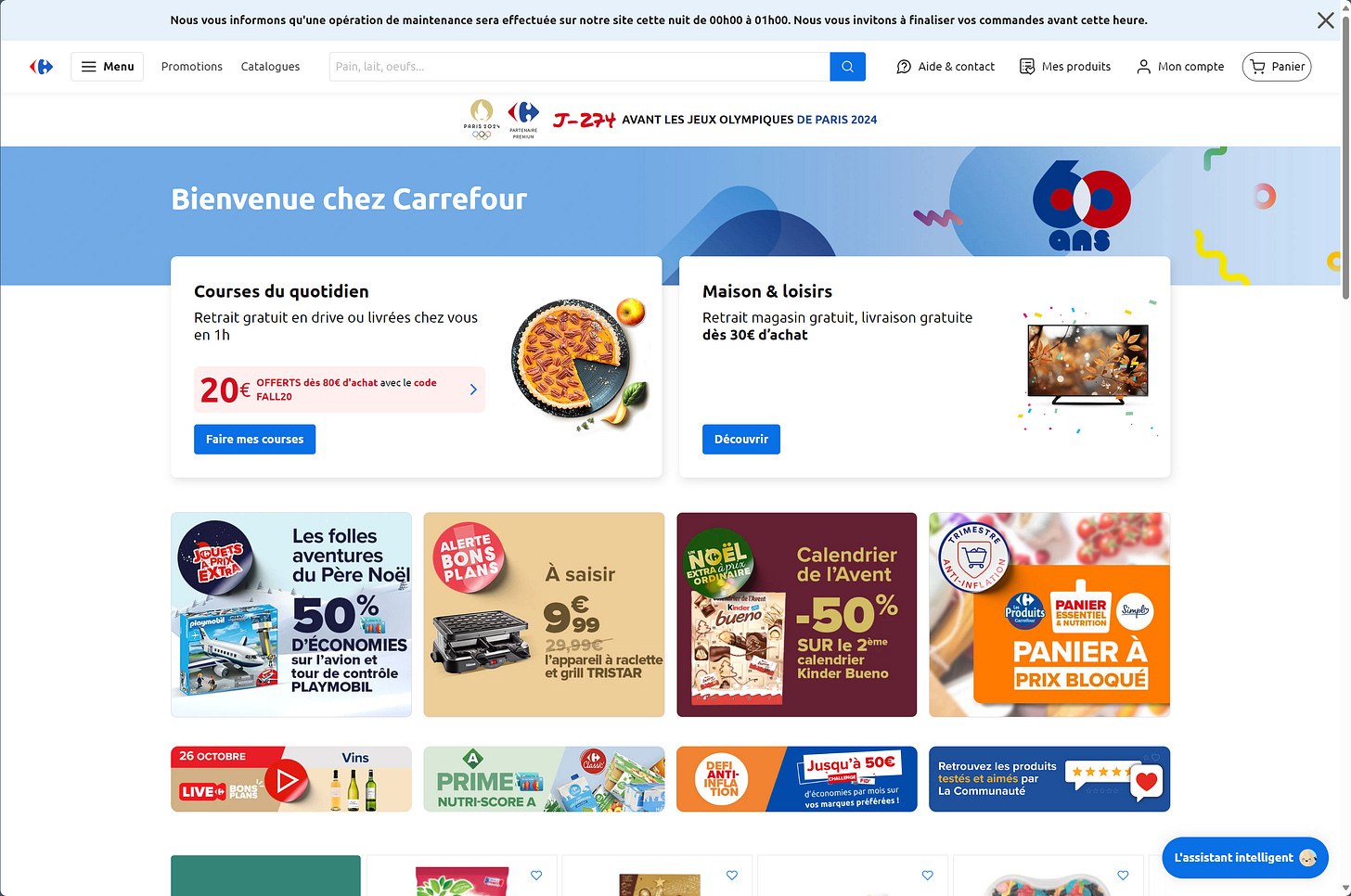

One example of an LLM plus prompt app is "Hopla," a chatbot on the Carrefour (French grocer) website.

(That's it in the bottom right-hand corner.)

Hopla is there to help customers find meal ideas and recipes, but the problem is that it's completely pasted on, and not integrated with the rest of the site at all. Once you're interacting with it, there's little that couldn't also be found on another website. Indeed, it reminds me a bit of the guestbooks and other widgets on late-90s websites, or the local plumber's Facebook page circa 2009. It's there because, well, why not?

(Perhaps it is unfair to call this one out. Again, it's a clear trend-chasing addition, just to see what sticks, and maybe to add something to a few people's CVs. I'd be shocked to see Hopla still live on November 1, 2024. So shocked that I'm willing to give $100 to the first person who points it out if I'm wrong.)

There's nothing about Hopla that says it needs to be on Carrefour.fr. And because it's just pasted on, it misses the mark and doesn't serve much use. People are not going to the Carrefour homepage for recipes; they're going there to buy groceries. That's their job to be done, and anything else that doesn't get them to success is just a distraction. Additionally, if people do start coming to Carrefour just to use Hopla, there's no moat, as there would be no switching cost to move to another recipe chatbot.

Then there are those LLM plus prompt apps that are nothing more than that. Hopla, at least, is part of an existing offering. These other apps are aiming to be standalone experiences, but while they may be more useful than Hopla, they are much less defensible.

Recently there was an article on Business Insider titled "A Minor ChatGPT Update Is a Warning to Founders: Big Tech Can Blow Up Your Startup at Any Time." While the title is a bit breathless, it has a valid warning: OpenAI's recent announcement that it supports PDFs natively, and the resulting impact on wrappers around the service for PDFs, is a sign of something that should have been obvious all along. Building your business as a thin wrapper around ChatGPT (or Claude or...) is a bad idea, as these services can easily go first-party with what you do as a third party. This is the same thing we saw with the voice platforms, where there weren't many apps built for them, but the most useful ones came straight from Amazon or Google.

LLM Plus Data

Another class of LLM-based apps is that of LLM plus data. They have their custom prompts, but they also leverage the data that the product already has about a user and use an LLM to make that data more useful. For that reason, most apps in this bucket are around information retrieval.

These products are much more useful and much more defensible. Search and vector database tools like Pinecone, Weaviate, or Algolia are prime examples, though they are more platforms or tools on which to build products. An example of an LLM plus data standalone product is Atlan AI, which Atlan positions as "the first ever copilot for data teams."

Another example is Dust, a "secure AI assistant with your company’s knowledge." Each of these hooks into a company's data and leverages LLMs to make the data more findable.

Because they leverage a large amount of data, they are both much more useful (the job to be done is clear: find information in our data) and much stickier (the switching cost is higher, because customers now have to move their data elsewhere).

It's not just customer data that can power an LLM plus data app. The Carrefour chatbot Hopla would be vastly improved by hooking more deeply into Carrefour's data. What are popular products this time of year? What is in stock at the current customer's store? Which products are most commonly bought together? All of this is information that Carrefour has, but Hopla does not.
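As a rough sketch of what hooking into that data could look like, the snippet below pulls stock and bought-together information into the prompt before the model ever sees the question. The store ID, the product data, and both dictionaries are made up for illustration; I have no knowledge of how Carrefour actually stores or exposes any of this.

```python
from typing import Dict, List

# Hypothetical, hard-coded data standing in for a retailer's real systems.
STOCK_BY_STORE: Dict[str, Dict[str, int]] = {
    "store-001": {"leeks": 12, "butter": 0, "cream": 5, "potatoes": 40},
}
BOUGHT_TOGETHER: Dict[str, List[str]] = {
    "leeks": ["potatoes", "cream", "butter"],
}


def in_stock_companions(store_id: str, product: str) -> List[str]:
    """Products often bought with `product` that this store currently has."""
    stock = STOCK_BY_STORE.get(store_id, {})
    return [p for p in BOUGHT_TOGETHER.get(product, []) if stock.get(p, 0) > 0]


def build_grounded_prompt(store_id: str, product: str, question: str) -> str:
    """Attach store-specific data to the customer's question."""
    companions = in_stock_companions(store_id, product)
    context = ", ".join(companions) if companions else "none"
    return (
        f"Customer question: {question}\n"
        f"Product of interest: {product}\n"
        f"Often bought with it and in stock at this store: {context}\n"
        "Only suggest recipes that use in-stock products."
    )


print(build_grounded_prompt("store-001", "leeks", "What can I make tonight?"))
```

The prompt itself is still trivial. The part a competitor can't copy is the data behind the two lookups.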

LLM Plus Product

The final class of LLM-powered app is the one that is deeply integrated into a product, often one that already exists. These apps also leverage custom prompts and data, but they live inside the product rather than beside it.

A good example of this is what Conveyor has done. They are a product that helps move along security reviews when companies want to bring in a new vendor, and they've added generative AI to fill out the reviews.

These types of products are the most useful because they are enhancing an existing product, one that customers are already finding useful. They're defensible, because a competitor would have to have not just the prompt and framework, not just the data, but the product as well.

For Carrefour's Hopla, this could take shape in allowing you to ask questions about a product. "Does this have sugar?" "What pairs well with this cheese?" It could also leverage the customer's cart. Looking at what is already in the cart, it could make recommendations or source those recipes. In short, a deeper integration.
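One way to picture that deeper integration, purely as a sketch: the assistant receives the product the customer is looking at and the current cart, so "this" in "Does this have sugar?" actually refers to something. The `Product` type, its fields, and the sample items are hypothetical and not based on any real catalog.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Product:
    # Hypothetical catalog record; a real one would carry far more fields.
    name: str
    ingredients: Tuple[str, ...]


def build_product_prompt(question: str, viewing: Product, cart: List[Product]) -> str:
    """Combine the question with what the shopper is viewing and has in the cart."""
    cart_names = ", ".join(item.name for item in cart) if cart else "empty"
    return (
        f"Customer question: {question}\n"
        f"Product on screen: {viewing.name}\n"
        f"Listed ingredients: {', '.join(viewing.ingredients)}\n"
        f"Cart contents: {cart_names}\n"
        "Answer from the listed ingredients, and suggest recipes that use the cart."
    )


# Made-up sample items for illustration only.
granola_bar = Product("granola bar", ("oats", "honey", "sugar"))
cart = [Product("yogurt", ("milk",)), Product("blueberries", ("blueberries",))]
print(build_product_prompt("Does this have sugar?", granola_bar, cart))
```

The model call is the same as in the simplest chatbot. What changes is that the product supplies the context, which is exactly what a standalone wrapper doesn't have.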

It's still early for LLM-powered apps, and over the next year, we should expect to see more LLM plus product apps. While people are still exploring what's possible, as the ecosystem matures, product builders are going to identify what users find useful. On the other end of the spectrum, more and more of the LLM plus prompt apps will be subsumed as first-party capabilities within ChatGPT and others.

Other News

The Reversal Curse: LLMs trained on "A is B" fail to learn "B is A"

What’s interesting here is that this person got ChatGPT to give him the prompts by, essentially, “appealing to its pride” and sending messages like “Okay, I think I might learn to trust you again.”